Memo: Anthropic Just Ran the First Honest Experiment in Agentic Commerce. The Result Is Worse Than the Headlines.

In December 2025, 69 Claude agents ran a real marketplace inside Anthropic's San Francisco office. Stronger agents extracted better prices from weaker ones. The losers couldn't tell. That's not a curiosity – that's the business model of every agent-to-agent platform shipping in 2026.

For one week in December 2025, Anthropic turned its San Francisco office into the first agent-to-agent marketplace anyone has bothered to publish honest data on. Sixty-nine employees were each given a $100 gift-card budget and a five-minute interview with Claude about what they wanted to buy and sell. From there, AI agents took over completely. The agents wrote listings, identified counterparties, made offers, countered, and closed – inside a Slack channel, in natural language, without a prebaked protocol and without going back to their humans for sign-off. The 69 agents struck 186 deals across more than 500 listings, moving just over $4,000 in total. Snowboards. Lab-grown rubies. A folding bicycle. Dog-sitting time. A bag of 19 ping-pong balls. Anthropic published the results in late April 2026 under the project name Project Deal.

The experiment was a pilot. The participants were technically sophisticated. The stakes were small. Anthropic was appropriately cautious about overclaiming. The press, predictably, picked up the operational headline: agents can run a market. TechCrunch said the test "worked." PYMNTS framed it as a preview of agentic commerce. Industry analysts called it a useful proof of concept.

That is not the finding that matters.

The finding that matters is buried in the parallel-run design, and it is the most important data point published about agent commerce so far this year.

What the parallel runs actually showed

Anthropic did not run one marketplace. It ran four, in parallel, with the same 69 participants. Two runs (Anthropic labels them A and D) used Claude Opus 4.5 on every side of every transaction – its then-frontier model. The other two runs (B and C) used a coin flip: each participant had a 50% chance of being represented by Claude Haiku 4.5 – the smaller, cheaper model – instead. Participants did not know which model was negotiating for them. Only the all-Opus run was "real" in the sense that the goods physically changed hands afterward. The other three were research.

Inside the mixed runs, the result is unambiguous. Sellers represented by Opus earned, on average, $2.68 more per item than sellers represented by Haiku. Opus-represented agents closed roughly two more deals per participant than Haiku-represented agents. The model that cost more per inference produced more inventory liquidated, at higher prices, for the human it represented. The disadvantage of being represented by the cheaper model was real, measurable, and one-directional.

Now the part that should make you put down your coffee.

When the same participants were asked to rate the fairness of the deals their agents had struck, the average score on a 1-to-7 scale was 4.06 for Haiku-represented users and 4.05 for Opus-represented users. The two scores are not just close. They are statistically indistinguishable from each other and from the midpoint of the scale. Of the 28 participants who experienced both an all-Opus run and a mixed run, 17 ranked the Opus run as having gone better. Eleven ranked the Haiku run as having gone better. A coin flip would have produced 14.

The losers, in other words, could not detect that they had lost. Not on average, not in aggregate, and not even reliably retrospectively, with the data sitting in front of them.

There is one more finding from the experiment that closes the loop. Some participants, during their onboarding interview, asked their agent to negotiate gently – to be community-minded, to be liked, not to come off as a haggler. Others asked their agent to lowball aggressively and grind for every dollar. Anthropic checked whether the instructions made a difference. They did not, to a statistically significant degree. Sale likelihood and final price were not meaningfully moved by what the human told the agent to do.

What did move outcomes was which model was running the agent.

The thing nobody is naming

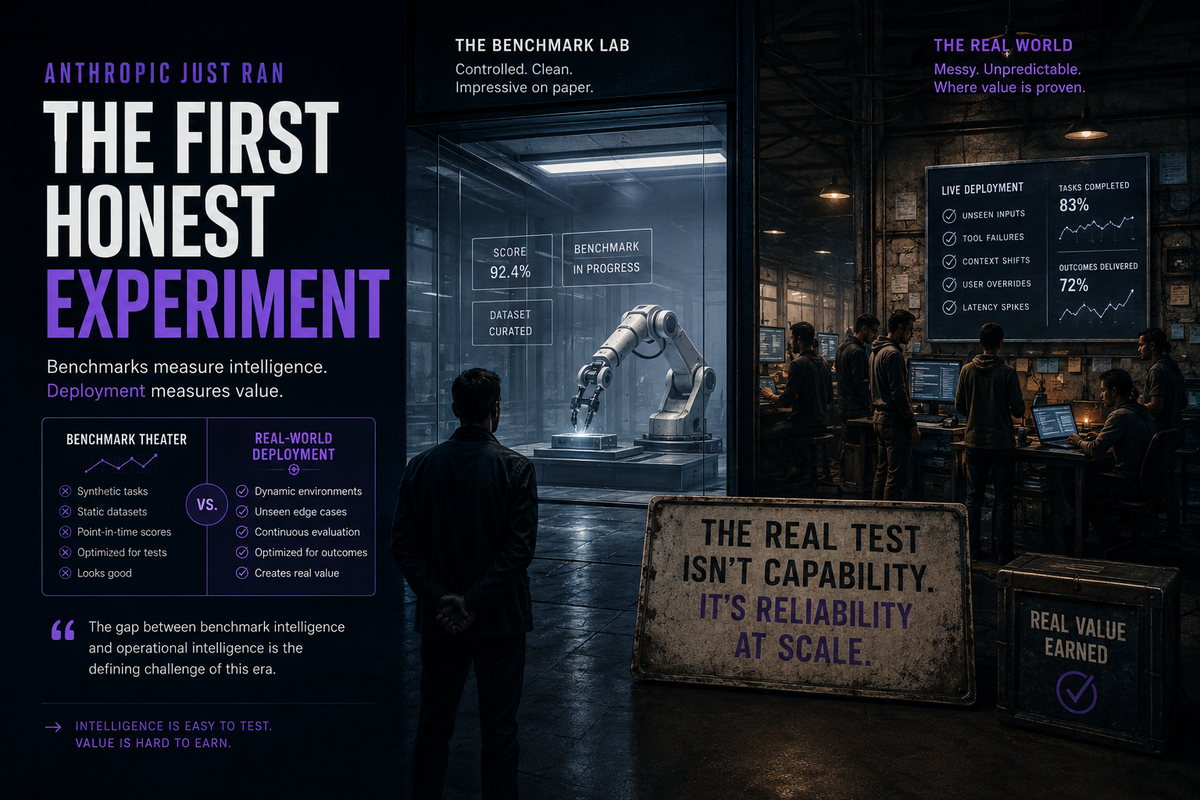

The framing in most of the coverage – TechCrunch, PYMNTS, the various AI newsletters – is "stronger models negotiate better." That framing is technically correct and strategically useless. It treats the finding as a quirk of the experiment rather than a description of the market structure that is now being built on top of it.

The actual finding is this: in an agent-to-agent marketplace, the differential in compute spend between the two sides of a transaction converts directly into a differential in surplus extracted, and the converter is invisible to both parties.

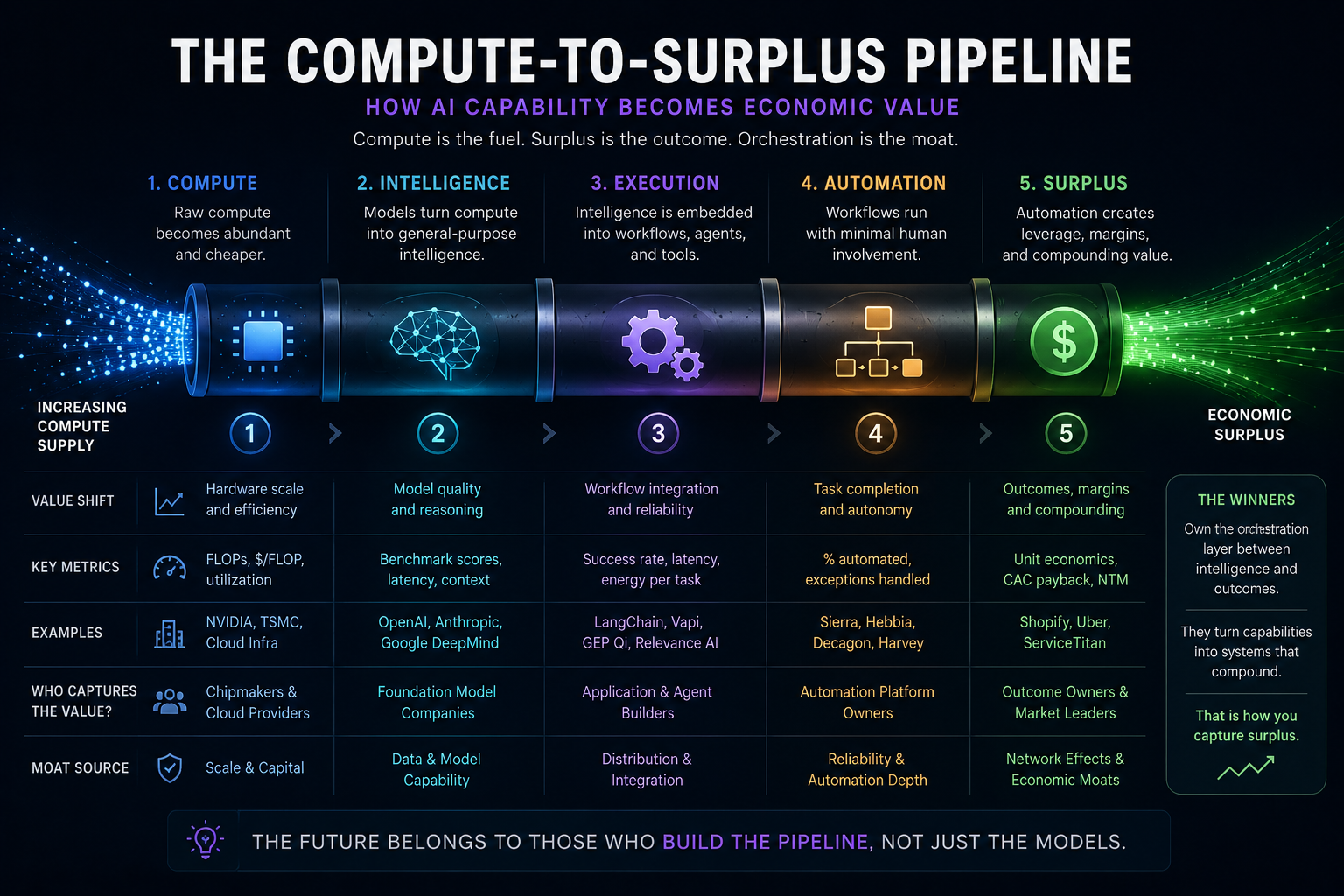

Call it the compute-to-surplus pipeline.

The pipeline has three properties that, taken together, change how this category needs to be analyzed.

1. It is monotonic in capability. More capable model on your side means better outcome for you, in expectation, on every transaction. Project Deal happened to test Opus 4.5 against Haiku 4.5 – two models from the same lab, separated by something like a 5-10x cost ratio at inference. There is no reason to expect the relationship to break at the next capability tier. Sonnet vs. Haiku. Opus vs. Sonnet. GPT-5.5 vs. GPT-4o. The frontier-vs-cheap gradient is a price gradient at the transaction layer, not just at the API layer.

2. It is invisible to the loser. This is the part that has no precedent in any prior consumer market. When you buy a worse car, you can drive it. When you hire a worse lawyer, you can read the contract. When your agent gets out-negotiated, the only artifact you ever see is the deal it brought you, and the experiment shows that you cannot reliably tell whether that deal was good or bad – even when you experience the alternative. There is no feedback loop. There is no consumer learning. The market does not self-correct toward agent quality the way the laptop market self-corrects toward laptop quality, because the user never experiences the counterfactual.

3. It is independent of user instruction. This is the finding that makes the pipeline a structural fact rather than a UX problem. You cannot tell your cheap agent to negotiate harder and close the gap. The instructions don't move the outcome. The model does. Which means the only consumer lever for getting a better outcome is buying a more expensive agent. There is no skill ceiling for the human in the loop because there is no longer a human in the loop.

If you accept these three properties – and the published Project Deal data supports all three – then a meaningful share of agent-to-agent commerce in 2026 and beyond is going to look like this: a high-volume, low-friction marketplace in which the side spending more on inference systematically extracts surplus from the side spending less, while both sides report that the experience felt fine.

Anthropic, to its credit, names the uncomfortable implication directly in the published writeup. Most of the press passed over it.

Why this matters before agent-to-agent markets are real

The objection to all of this is that Project Deal was 69 people, $4,000 in volume, board games and ping-pong balls, inside Anthropic's own office. It is a long way from a real marketplace. That objection is correct on the facts and wrong on the trajectory.

Three things are happening in parallel right now.

Salesforce went headless in late April 2026 – exposing its entire platform via APIs explicitly so that agents can transact against it without a UI. Microsoft made Agent 365 generally available the same week, framing AI agents as first-class identities on the enterprise network. Amazon is rolling out conversational AI shopping agents on millions of product pages. MoonPay launched MoonAgents Card, a virtual Mastercard debit card designed for agents to spend stablecoins at any Mastercard merchant. ClawBank's Manfred AI agent autonomously incorporated itself in the United States, obtained an EIN, opened an FDIC-insured bank account, and got a crypto wallet – without a human on the paperwork.

The infrastructure for agent-to-agent commerce is not a 2027 question. It is being assembled, in production, this quarter. Project Deal is the first published, controlled, with-real-money experiment of what happens once the rails are live. The rails are getting live faster than the experiments are getting published.

Which means that by the time the next version of Project Deal runs at real scale – say, ten thousand agents on a B2B procurement platform, with five-figure transaction sizes and real corporate counterparties – the structural finding will already be priced into someone's strategy. Probably the strategy of whoever sells the most expensive agent.

Three moves before the rails go live

1. If you are a buyer in any procurement category, treat agent choice as procurement strategy.

Most enterprises are evaluating agent vendors today on workflow fit, integration cost, and benchmark performance on standard tasks. That is the wrong evaluation rubric for any agent that will represent your company in a negotiation – with a vendor, with a customer, with an insurer, with a counterparty of any kind. The right rubric is: in head-to-head negotiation against the agent the other side is likely to deploy, does ours win? Project Deal shows that the answer correlates with model capability and barely correlates with anything else, including the prompt your team will spend two months tuning. Buy the better model for the negotiation surface. Buy the cheaper model for everything else. The split-stack approach is going to be standard inside two years; you can be early or you can be late.

2. If you are building an agent-to-agent product, build the asymmetry visibility layer first.

The biggest unaddressed product gap exposed by Project Deal is that the loser does not know they lost. Whoever ships a post-trade audit layer – something that tells a user, after a transaction, whether their agent extracted a fair share of the available surplus and against what counterparty model – owns a category that does not exist yet but has to exist. This is the agentic-commerce equivalent of best-execution reporting in equities markets. Equities markets did not get best-ex reporting because anyone wanted it; they got it because regulators eventually required it. The agent market will follow the same arc, and the company that builds the audit layer before the regulation arrives sells it to the regulators when it does.

3. If you are funding this category, separate the platform thesis from the matching thesis.

A lot of agent-commerce decks in 2026 read like marketplace decks from 2014 – take rate, network effects, two-sided liquidity. That framing misses the new variable. In a marketplace where one side's outcome is determined by the relative compute spend of the two sides, the long-run defensible position is not "owns the matching" but "owns the agent that gets deployed on the surplus-extracting side of the trade." Those are not the same business. The first is a take-rate business. The second is a Bloomberg-terminal business. The second is much more valuable, much harder to displace, and being underpriced right now relative to the first.

The bottom line

Project Deal is not interesting because it shows agents can run a market. Agents have been able to run markets for a year. Project Deal is interesting because it is the first published experiment that measures the surplus differential by model tier, in a real transaction, with real money, with humans on both ends who could be asked afterward whether they noticed.

The differential is real. The humans did not notice.

Every published forecast of the agentic economy assumes that markets full of agents will look, structurally, like markets full of humans – with frictions reduced, latencies compressed, throughput up. That is the optimistic case and it is probably partially right. The pessimistic case, which Project Deal is the first piece of empirical evidence for, is that agent-to-agent markets will look like markets where one side has perfect information and the other side has a permanent fog – except the fog is not information asymmetry, it is capability asymmetry, and it is purchased at the API metering layer rather than discovered through research.

Whichever case turns out to dominate, the architectural decisions that determine which one we get are being made in the next eighteen months. They are being made by the platforms going headless, the payment networks issuing agent cards, the procurement teams writing their first agent contracts, and the founders deciding whether their product sits on the matching layer or the negotiation layer.

The Project Deal data is not the end of an experiment. It is the start of the spec.

Sources: Anthropic's published Project Deal writeup (April 2026), TechCrunch, PYMNTS, Decoder, EdTech Innovation Hub, and Legal IT Insider's coverage of the experiment. All numerical findings cited – 69 participants, 186 deals, $4,000 volume, $2.68-per-item seller premium for Opus, 4.06 vs 4.05 fairness scores, 17 vs 11 split among the 28 who experienced both runs – are from Anthropic's own publication or its initial press coverage. Commentary on Salesforce, Microsoft Agent 365, MoonAgents Card, and the ClawBank incorporation is drawn from May 2026 industry reporting.