Memo: Klarna Fired 700 Agents, Then Hired Them Back, Then Saved $60M Anyway. What Actually Happened.

Two and a half years after Klarna replaced 700 customer service agents with an OpenAI-powered assistant, the company is hiring humans again – and quietly building the architecture every enterprise will need next.

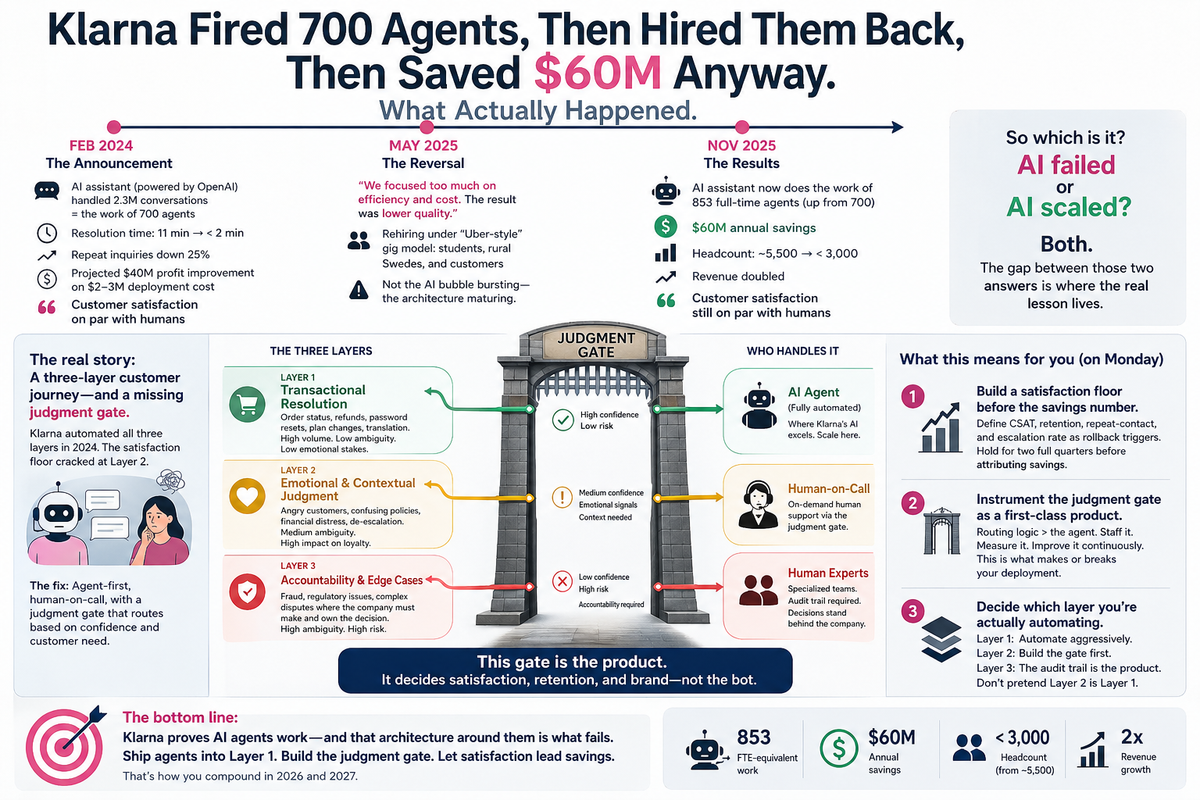

In February 2024, Klarna told the world it had done what almost everyone else was still talking about. Its AI assistant, built with OpenAI, had handled 2.3 million customer service conversations in its first month – the workload of 700 full-time agents – across 35-plus languages, and the company projected a $40 million profit improvement on a deployment cost of $2–3 million. Resolution times had collapsed from 11 minutes to under 2. Repeat inquiries fell 25%. CEO Sebastian Siemiatkowski stood up and said customer satisfaction was on par with humans.

Every CFO with an AI line item read that announcement. Some of you forwarded it to your board.

In May 2025, Klarna walked it back. Siemiatkowski told Bloomberg the company had "focused too much on efficiency and cost," that the result was "lower quality," and that Klarna was rehiring – though under an "Uber-style" gig model, with shifts staffed by students, rural Swedes, and Klarna's own customers. The press read this as the AI bubble's first big enterprise reversal. That's the wrong reading.

In November 2025, on Klarna's first earnings call as a public company, Siemiatkowski said the AI assistant was now doing the work of 853 full-time agents – up from 700 – and saving $60 million annually, while the company had cut headcount from roughly 5,500 to under 3,000 and doubled revenue. Customer satisfaction, he said, was still on par with humans.

So which is it? Did the AI fail or did it scale?

Both. And the gap between those two answers is the most important thing happening in enterprise AI right now. It is also what the copilot-vs-agents debate has been getting wrong.

What actually broke

The mistake at Klarna was not technical. The chatbot worked. The metric that made the headlines – "the work of 700 agents" – was real. Resolution times were real. The $40M projection was real.

What was not measured, in Q1 2024, was the lag.

Customer satisfaction in a support function is what economists call a lagging indicator. The readings it produces in week one of an AI rollout look almost identical to the readings from the last quarter under human staff. It can take 6–12 months for satisfaction to decline meaningfully, and another 6 for those declines to show up in churn, retention, and brand impact. Klarna shipped the press release in month two. By the time the satisfaction floor cracked, the savings were already booked, the layoffs were already done, and the IPO narrative had been built on top of the numbers.

This is the part the original Klarna case study buried, and the part most "agents are the future" essays still skip: the failure mode of an AI customer service rollout is invisible for the first two quarters and irreversible by the fourth.

When Forrester analyst Kate Leggett wrote later that Klarna "overpivoted to cost containment without thinking about the longer-term impact of customer experience," she was being polite. The actual structural error was that Klarna's measurement architecture had no way to distinguish "the bot answered the question" from "the customer left feeling like they could trust this company with their money next time." The first is throughput. The second is the entire business. Klarna's AI was excellent at the first and structurally blind to the second.

That blindness is not a Klarna problem. It is the default state of almost every AI rollout shipping in 2026.

Why "copilots vs. agents" is the wrong frame

Most of the industry conversation right now sorts AI deployments into two buckets: copilots (AI assists a human) and agents (AI executes autonomously). The implied arc is that copilots are the awkward present and agents are the inevitable future.

Klarna shows the arc is wrong. Klarna's chatbot was already an agent in 2024 – it executed end-to-end, it resolved tickets without a human in the loop, it handled refund flows, and by late 2025 Siemiatkowski was crediting it with $60M a year in savings. The reversal in May 2025 was not "we should have built a copilot instead." The reversal was: we built a one-layer agent, the customer journey has at least three layers of judgment, and we automated as if there were only one.

The three layers, made visible by Klarna's own reversal:

Layer 1 – Transactional resolution. Order status, refund issuance, password resets, plan changes, language translation. High volume, low ambiguity, low emotional stakes. Klarna's AI excelled here. The 853-FTE-equivalent number is real, and as of late 2025 Klarna is doubling down on it. This layer should be fully automated. The savings are durable.

Layer 2 – Emotional and contextual judgment. A customer is angry but trying not to show it. A customer is technically wrong about their refund eligibility but is right that the policy is confusing. A customer is in financial distress and the "correct" answer would push them further in. This is where Klarna eliminated judgment entirely in 2024 and where the satisfaction floor cracked. It is also the layer where vendors selling "agents" today are quietly weakest, because LLM benchmarks do not measure de-escalation.

Layer 3 – Edge cases that require accountability. Fraud disputes, regulatory escalations, situations where the company has to make a real decision and stand behind it. Klarna never seriously tried to automate this, but a lot of competitors have, and most are about to learn the same lesson 12 months behind Klarna.

The Klarna reversal was not a retreat from agents. It was a forced unbundling of the three layers. The "Uber-style" rehiring is not a return to human-staffed support. It is the construction of an on-demand human layer that gets called only when the agent at Layer 1 falls below a confidence threshold or detects emotional content. The architecture is now: agent-first, human-on-call, with the routing logic between them as the actual product.

That routing logic – call it the judgment gate – is the thing nobody is shipping yet, and it is where the next two years of enterprise AI engineering will actually happen.
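To make the judgment gate concrete, here is a minimal Python sketch of the two triggers described above: low agent confidence and detected emotional content. Everything in it is an assumption for illustration, including the `AgentDraft` shape, the `judgment_gate` name, and the 0.80 threshold. None of it describes Klarna's actual system, and a real gate would need a tested emotion classifier and calibrated confidence scores behind it.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AGENT = "agent"   # the Layer 1 agent answers on its own
    HUMAN = "human"   # the on-call human layer takes over


@dataclass(frozen=True)
class AgentDraft:
    """What the agent produced for one customer message (hypothetical shape)."""
    confidence: float        # agent's self-reported confidence, 0.0 to 1.0
    emotional_content: bool  # flag from an upstream classifier (assumed to exist)


# Illustrative threshold only; not a figure from Klarna or any vendor.
CONFIDENCE_FLOOR = 0.80


def judgment_gate(draft: AgentDraft,
                  confidence_floor: float = CONFIDENCE_FLOOR) -> Route:
    """Route to a human when the message is emotional or the agent is unsure."""
    if draft.emotional_content:
        return Route.HUMAN
    if draft.confidence < confidence_floor:
        return Route.HUMAN
    return Route.AGENT
```

The emotional-content check runs first on purpose: a confident answer to an upset customer is exactly the Layer 2 case the gate exists to catch.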

What this means for you on Monday morning

If you are evaluating an AI deployment in any function with an external customer touchpoint – support, sales, claims, onboarding, collections – Klarna's full arc, not its 2024 highlight reel, is the case study that matters. Three concrete moves.

1. Build a satisfaction floor before you ship the savings number.

Klarna's structural error was that the savings story was public in month two and the satisfaction story was unmeasured until month fifteen. Reverse the order. Define a satisfaction floor – CSAT, retention cohort behavior, repeat-contact rate, escalation-to-human rate as a leading signal – and commit to it as a rollback trigger before the press release goes out. If satisfaction holds for two full quarters, then attribute savings. If it drops, the savings were borrowed from future revenue, and you need to know that before the market does.
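As a rough illustration of "commit to it as a rollback trigger," the rule above can be written down as a small Python check. The metric names, the `SatisfactionFloor` and `rollout_decision` helpers, and every threshold are hypothetical, and retention cohort behavior is left out for brevity. Treat it as a sketch of the decision rule, not a measurement system.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class QuarterMetrics:
    """One quarter of post-rollout readings (hypothetical fields)."""
    csat: float                 # average satisfaction score, 0 to 100
    repeat_contact_rate: float  # share of customers who had to contact again
    escalation_rate: float      # share of conversations escalated to a human


@dataclass(frozen=True)
class SatisfactionFloor:
    """Thresholds agreed on before the savings number is published."""
    min_csat: float
    max_repeat_contact_rate: float
    max_escalation_rate: float

    def holds(self, m: QuarterMetrics) -> bool:
        return (m.csat >= self.min_csat
                and m.repeat_contact_rate <= self.max_repeat_contact_rate
                and m.escalation_rate <= self.max_escalation_rate)


def rollout_decision(floor: SatisfactionFloor,
                     quarters: Sequence[QuarterMetrics],
                     required_quarters: int = 2) -> str:
    """Apply the rule: any breach rolls back; savings wait for two clean quarters."""
    if any(not floor.holds(q) for q in quarters):
        return "rollback"
    if len(quarters) >= required_quarters:
        return "attribute_savings"
    return "keep_measuring"
```

With a floor such as `SatisfactionFloor(80.0, 0.20, 0.15)`, one clean quarter yields `"keep_measuring"`, two clean quarters yield `"attribute_savings"`, and any quarter below the floor yields `"rollback"`.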

2. Instrument the judgment gate as a first-class product.

Most teams shipping agents in 2026 are treating "escalation to human" as a fallback – a sad path, a failure mode. Klarna's reversal proves it is the product. The routing logic that decides when an agent answers, when a human answers, and when the agent answers but a human reviews, is what determines whether your rollout survives contact with reality. Staff it accordingly. The senior engineer on your AI deployment should not be the one who built the agent. It should be the one who builds the gate.

3. Decide which layer you are actually automating.

Before the next sprint, write down – for the workflow you are about to ship – which of the three layers it touches. If it touches Layer 1 only (transactional), proceed. If it touches Layer 2 (emotional/contextual), build the gate first and ship the agent second. If it touches Layer 3 (accountability), the agent is not the product; the audit trail is. Most teams are shipping into Layer 2 and pretending it is Layer 1, because the demos look identical.
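The write-it-down exercise can be reduced to a few lines of Python. This is a sketch under one added assumption that the text implies but does not state: when a workflow touches more than one layer, the highest layer governs. The `Layer` and `shipping_plan` names are illustrative.

```python
from enum import IntEnum
from typing import Iterable


class Layer(IntEnum):
    TRANSACTIONAL = 1   # order status, refunds, password resets
    JUDGMENT = 2        # emotional and contextual judgment
    ACCOUNTABILITY = 3  # fraud disputes, regulatory escalations


def shipping_plan(layers_touched: Iterable[Layer]) -> str:
    """Return the next step for a workflow, given the layers it touches.

    Assumption: the highest layer touched decides the plan.
    """
    layers = set(layers_touched)
    if not layers:
        raise ValueError("write down at least one layer before shipping")
    highest = max(layers)
    if highest is Layer.ACCOUNTABILITY:
        return "build the audit trail; the agent is not the product"
    if highest is Layer.JUDGMENT:
        return "build the gate first, ship the agent second"
    return "proceed"
```

A refund flow that also handles distressed customers would be `shipping_plan({Layer.TRANSACTIONAL, Layer.JUDGMENT})`, which returns the gate-first plan rather than "proceed".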

The bottom line

Klarna did not prove that AI agents do not work. It proved that agents work fine, and the architecture around them is what fails. The November 2025 numbers – 853 FTEs of equivalent work, $60M in savings, doubled revenue on half the headcount – are the strongest enterprise AI result on the public record. The May 2025 reversal is the strongest enterprise AI cautionary tale on the public record. They are the same deployment.

The companies that internalize that – that ship agents into Layer 1 aggressively, instrument the judgment gate as a real product, and resist the temptation to publish the savings number before the satisfaction lag closes – will compound through 2026 and 2027. The companies that ship Klarna's 2024 announcement without reading Klarna's 2025 retraction will spend 2027 rebuilding their human layer at twice the cost.

Copilots were never the alternative to agents. The alternative to a one-layer agent is a three-layer system with a judgment gate in the middle.

That is what comes after.

Sources: Klarna press releases (Feb 2024, Nov 2025), Bloomberg interviews with Sebastian Siemiatkowski (May 2025), Forrester commentary by Kate Leggett, Q3 2025 Klarna earnings call, and reporting in Computer Weekly, CX Dive, and Fortune. All figures cited are from publicly reported statements by Klarna or its CEO.